Confidence, Anxiety, and Identity in the Age of Hyper-Fluent Correction

1. The Missing Variable in AI Feedback Discourse

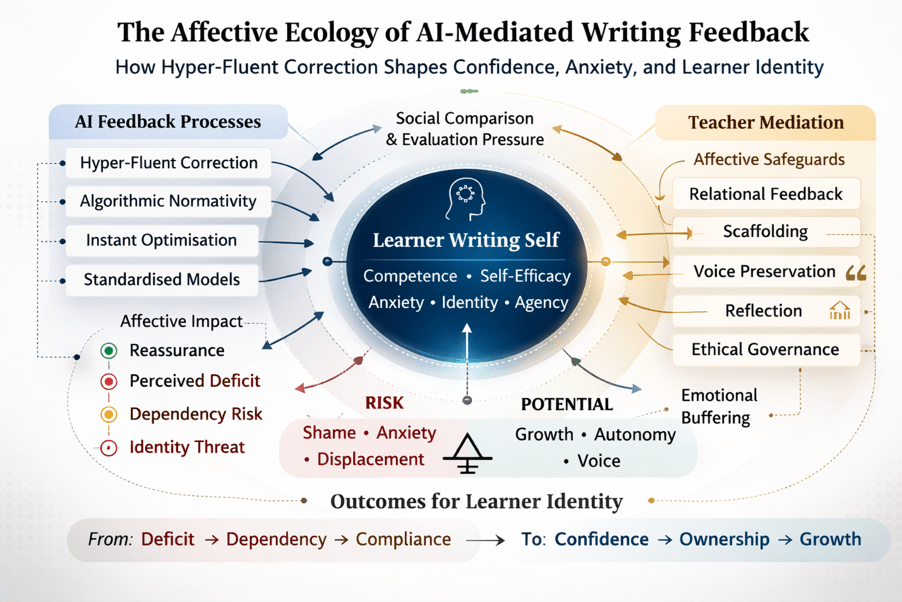

The rapid integration of generative artificial intelligence (GenAI), particularly large language models (LLMs), into second language (L2) writing classrooms has generated sustained debate around accuracy, validity, authorship, and assessment integrity. Yet one dimension remains comparatively under-theorised: the affective consequences of AI-mediated feedback.

Writing is not merely a cognitive activity. It is an identity-laden, evaluative, and emotionally saturated practice. For L2 writers especially, writing frequently intersects with vulnerability, self-perception, and legitimacy as language users. When feedback is delivered not by a human interlocutor but by an algorithm capable of producing instant, hyper-fluent revisions, the affective ecology of writing shifts.

Recent policy and research discussions acknowledge that generative AI systems reshape educational participation in ways that extend beyond efficiency (UNESCO, 2023; OECD, 2024). However, little systematic attention has been paid to how algorithmic feedback influences learner confidence, anxiety, dependency patterns, and writer identity. This post argues that GenAI feedback does not simply alter revision processes; it recalibrates the emotional architecture of writing development.

2. Hyper-Fluent Correction and the Psychology of Comparison

One of the defining features of LLM-generated feedback is its fluency. Corrections are not merely accurate; they are stylistically refined, rhetorically coherent, and often lexically sophisticated. While this may appear pedagogically advantageous, it introduces a powerful comparative dynamic.

Social comparison theory suggests that individuals evaluate their competence relative to salient standards (Festinger, 1954). In AI-mediated writing contexts, the model’s output becomes an implicit benchmark of ideal language use. For L2 learners, particularly in EFL contexts where linguistic insecurity may already be present, this can produce two divergent affective responses:

- Reassurance and scaffolding, when feedback is perceived as supportive.

- Deflation and inadequacy, when AI revisions highlight perceived linguistic deficits.

Research on feedback affect demonstrates that emotional responses significantly shape uptake and long-term learning (Pitt & Norton, 2017). Moreover, Carless and Boud (2018) emphasise that feedback literacy involves managing affective reactions to evaluative information. When AI feedback is linguistically superior to learners’ production in ways that appear effortless, it risks amplifying negative self-assessment rather than strengthening competence.

The critical issue is not correction itself, but the affective framing of correction. Hyper-fluent AI outputs can inadvertently position learners as perpetually deficient relative to an unreachable standard.

3. Shame, Dependency, or Reassurance? Divergent Affective Pathways

AI feedback does not produce uniform emotional outcomes. Instead, it may generate three primary affective trajectories.

3.1 Reassurance and Reduced Anxiety

In some contexts, learners report lower writing anxiety when interacting with AI tools, particularly when the feedback environment feels non-judgmental (Wang, 2024). The absence of human evaluation may reduce fear of embarrassment, especially in cultures where public error carries social cost.

This aligns with research suggesting that writing anxiety is strongly influenced by evaluative pressure (Cheng, 2004). When AI feedback is framed as iterative and exploratory rather than summative, it may create psychological safety that encourages experimentation.

3.2 Dependency and Cognitive Offloading

However, emerging research also warns of over-reliance and passive dependence (Kasneci et al., 2023). From a self-determination perspective (Deci & Ryan, 2000), autonomy is central to intrinsic motivation. If learners begin to outsource revision decisions reflexively, the perceived locus of competence shifts from self to system.

Over time, this may weaken self-efficacy, which Bandura (1997) defines as belief in one’s capabilities to organise and execute the courses of action required to produce given attainments. When linguistic improvement becomes externally generated rather than internally constructed, learners risk conflating polished output with personal growth.

3.3 Shame and Identity Threat

Perhaps the most under-examined dimension concerns identity. L2 writing is closely tied to legitimacy as a language user. Norton (2013) emphasises that language learning involves investment in identity and symbolic capital. If AI consistently “improves” a learner’s voice in ways that erase stylistic individuality or amplify deficit perceptions, learners may experience subtle identity displacement.

This is particularly acute in multilingual contexts where rhetorical variation is culturally grounded. Algorithmic standardisation may unintentionally reinforce Anglocentric norms (UNESCO, 2023), thereby positioning certain forms of expression as inferior.

Thus, AI feedback may not only correct language; it may reshape how learners perceive their right to speak.

4. Teacher–Student Relational Displacement

Feedback is traditionally relational. It conveys not only evaluation but care, expectations, and pedagogical presence (Hyland & Hyland, 2006). When feedback becomes algorithmically mediated, the relational dimension shifts.

Selwyn, Ljungqvist, and Sonesson (2025) argue that generative AI introduces “pedagogical frailties,” including displacement of professional judgement and relational labour. Teachers are not merely information providers; they interpret learner histories, calibrate tone, and mediate affective responses.

When AI feedback becomes primary, three risks emerge:

- Diminished relational feedback: Learners may receive technically accurate but emotionally neutral input.

- Invisible teacher labour: Teachers increasingly curate, verify, and contextualise AI output.

- Authority redistribution: Epistemic authority may migrate toward algorithmic fluency.

This does not imply that AI replaces teachers. Rather, it intensifies the need for affective mediation. Teachers must interpret how learners emotionally experience AI feedback and restore relational balance where necessary.

5. Rethinking Feedback Literacy as Affective–Epistemic Competence

Traditional conceptualisations of feedback literacy have foregrounded learners’ capacity to make evaluative judgements and take informed action in response to feedback (Carless & Boud, 2018). While this remains foundational, AI-mediated writing environments necessitate an important expansion of this construct. Under GenAI conditions, feedback literacy must evolve into an affective–epistemic competence that integrates cognitive evaluation with emotional discernment. Learners are no longer engaging solely with human commentary shaped by relational cues and pedagogical intent; they are interacting with hyper-fluent, algorithmically generated suggestions that may implicitly recalibrate their perceptions of competence and voice. Consequently, learners must develop the capacity to:

- Recognise and regulate their emotional reactions to AI corrections.

- Distinguish between genuine textual improvement and subtle forms of identity displacement.

- Evaluate suggestions critically without internalising deficit narratives.

- Preserve authorship agency even when confronted with stylistically superior alternatives.

This expanded literacy aligns closely with UNESCO’s (2023) call for human-centred AI integration that safeguards learner autonomy and critical capacity rather than encouraging passive technological dependence. In practical pedagogical terms, cultivating such competence requires deliberate instructional design. Structured reflection on emotional responses to AI feedback can help learners externalise and examine affective reactions that might otherwise remain implicit. Revision memos that require students to justify why specific suggestions were accepted or rejected transform automated feedback into an object of metacognitive scrutiny. Explicit discussion of voice preservation foregrounds the importance of rhetorical identity in multilingual writing, while teacher-mediated conversations about confidence, growth, and independence reinforce relational scaffolding within AI-supported environments. Absent these structures, AI feedback risks becoming affectively opaque—technically efficient yet emotionally unexamined—thereby undermining the very autonomy and developmental agency that feedback is intended to support.

6. Toward an Affective Pedagogy of AI-Mediated Writing

If generative AI is to remain pedagogically defensible within L2 writing classrooms, its integration must be conceptualised not solely as a cognitive support mechanism but as an intervention within a deeply affective learning space. Writing development is inseparable from confidence, vulnerability, identity negotiation, and perceived legitimacy as a language user. Any pedagogical framework that incorporates AI-generated feedback must therefore account for the emotional consequences of hyper-fluent correction alongside its linguistic affordances. The question is no longer whether AI can improve textual quality, but whether its presence sustains or destabilises the psychological conditions necessary for long-term writer development.

An affective pedagogy of AI-mediated writing begins by reframing the status of AI within the classroom. Rather than positioning generative output as an ideal linguistic benchmark, AI must be presented as provisional support—an exploratory resource whose suggestions remain open to interpretation, adaptation, and rejection. This reframing is critical in preventing learners from equating algorithmic fluency with unattainable linguistic perfection. When AI becomes an aspirational standard rather than a negotiable interlocutor, learners risk internalising deficit narratives that undermine self-efficacy.

Equally important is the preservation of productive struggle as a constitutive element of competence formation. Language development depends on effortful formulation, noticing, revision, and self-correction. If AI systems prematurely resolve these cognitive tensions, the developmental value of writing tasks may be diminished. An affective pedagogy does not seek to eliminate difficulty but to scaffold it, ensuring that learners experience challenge as growth-oriented rather than humiliating or overwhelming. Maintaining this balance protects both autonomy and resilience.

Furthermore, the protection of learner voice must be treated as a central concern. Algorithmic models frequently reproduce standardised rhetorical norms that may inadvertently homogenise stylistic variation. In multilingual and intercultural writing contexts, such standardisation risks marginalising alternative discursive identities. An affectively informed pedagogy therefore requires explicit attention to voice preservation, encouraging learners to interrogate whether AI suggestions clarify expression or subtly reshape authorial stance. Writing instruction must reinforce that intelligibility does not necessitate identity erasure.

The teacher’s relational presence also remains indispensable within this framework. Feedback has traditionally functioned as a site of interpersonal recognition, conveying not only evaluation but care, encouragement, and contextualised guidance. When AI systems mediate revision, teachers must actively reassert this relational dimension through discussion, interpretive framing, and emotional calibration. Pedagogical authority is not displaced by AI; rather, it becomes more crucial as a stabilising force that contextualises algorithmic intervention within human developmental trajectories.

Finally, an affective pedagogy requires longitudinal attentiveness to patterns of dependency. While short-term reliance on AI assistance may reduce anxiety or increase perceived fluency, sustained over-delegation can weaken self-trust and internalised competence. Monitoring how learners interact with AI over time—whether they increasingly justify decisions independently or defer reflexively to machine output—becomes part of responsible instructional practice.

The objective of such a pedagogy is not to diminish technological sophistication or resist innovation. Rather, it is to prevent affective erosion. Writing development depends not only on the precision of corrections but on sustained belief in one’s capacity to improve. If AI-mediated feedback enhances textual polish while undermining writer confidence or identity ownership, its pedagogical value becomes compromised. The long-term integrity of L2 writing education therefore rests on ensuring that generative systems support not only accuracy, but emotional sustainability and enduring authorial agency.

7. Conclusion: Beyond Accuracy Toward Emotional Sustainability

The dominant discourse surrounding AI feedback often centres on efficiency, scalability, and textual quality. Yet writing is fundamentally affective work. Confidence, anxiety, legitimacy, and identity are not peripheral variables; they are constitutive of development.

Hyper-fluent AI correction may reassure, deflate, empower, or displace. Its impact depends not solely on algorithmic design, but on pedagogical mediation. In the absence of affective structuring, generative feedback risks cultivating compliance without confidence, fluency without ownership, and improvement without identity.

The future of AI-mediated writing pedagogy therefore hinges on a simple but under-acknowledged truth: learners do not only need accurate feedback. They need emotionally sustainable feedback.

References

Bandura, A. (1997). Self-efficacy: The exercise of control. W. H. Freeman.

Carless, D., & Boud, D. (2018). The development of student feedback literacy: Enabling uptake of feedback. Assessment & Evaluation in Higher Education, 43(8), 1315–1325.

Cheng, Y. S. (2004). A measure of second language writing anxiety: Scale development and preliminary validation. Journal of Second Language Writing, 13(4), 313–335.

Deci, E. L., & Ryan, R. M. (2000). The “what” and “why” of goal pursuits: Human needs and the self-determination of behavior. Psychological Inquiry, 11(4), 227–268.

Festinger, L. (1954). A theory of social comparison processes. Human Relations, 7(2), 117–140.

Hyland, K., & Hyland, F. (2006). Feedback on second language students’ writing. Language Teaching, 39(2), 83–101.

Kasneci, E., Sessler, K., Küchemann, S., Bannert, M., Dementieva, D., Fischer, F., et al. (2023). ChatGPT for good? On opportunities and challenges of large language models for education. Learning and Individual Differences, 103, 102274.

Norton, B. (2013). Identity and language learning: Extending the conversation (2nd ed.). Multilingual Matters.

OECD. (2024). Digital education outlook 2024: Towards an AI-enabled education system. OECD Publishing.

Selwyn, N., Ljungqvist, M., & Sonesson, A. (2025). When the prompting stops: Exploring teachers’ work around the educational frailties of generative AI tools. Learning, Media and Technology. Advance online publication.

UNESCO. (2023). Guidance for generative AI in education and research. UNESCO.

Wang, C. (2024). Exploring students’ generative AI-assisted writing processes: AI as a mediating artefact in revision activity. Educational Technology Research and Development. Advance online publication.
