Reinterpreting the Zone of Proximal Development Under Algorithmic Conditions

1. The Problem of Invisible Replacement

The incorporation of generative artificial intelligence into L2 writing instruction is typically articulated through a discourse of pedagogical enhancement: AI is said to assist, augment, scaffold, and optimise. Such terminology, while reassuring, may obscure a more consequential transformation in the architecture of learning. When AI systems can instantaneously generate explanations, restructure arguments, recalibrate register, and supply rhetorically polished reformulations, they do not merely provide support within the writing process; they increasingly perform the process itself. Under these conditions, the distinction between mediated participation and automated production becomes epistemically unstable. What appears as scaffolding may, in practice, constitute silent replacement.

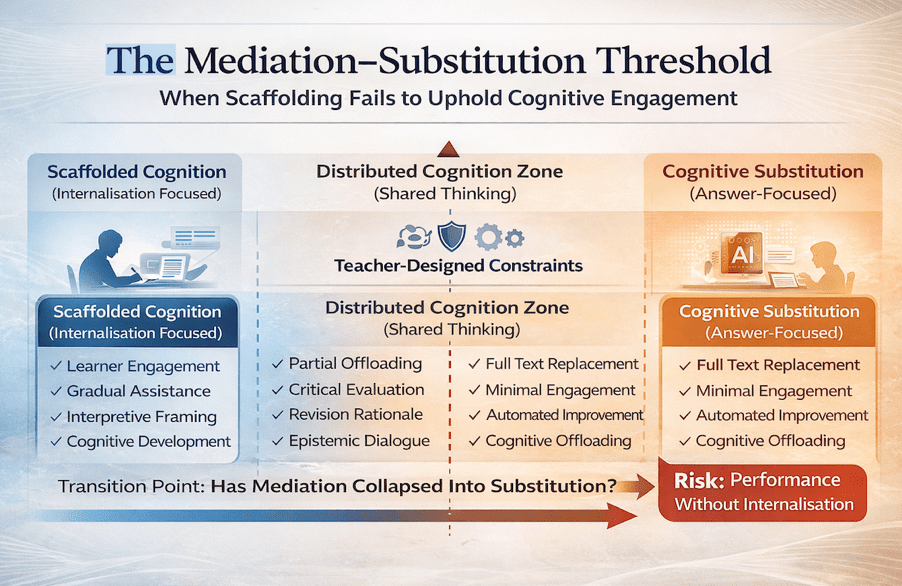

The critical issue, therefore, is not whether generative systems can facilitate writing development, but how to determine the point at which facilitation transitions into substitution. Within sociocultural theory, mediation is the engine of internalisation: learners appropriate tools and forms of reasoning through guided participation (Vygotsky, 1978). Scaffolding presupposes cognitive engagement, gradual transfer of responsibility, and the eventual consolidation of independent competence. However, when mediation becomes frictionless—when linguistic formulation, lexical retrieval, and structural decision-making are executed externally and immediately—the learner’s role risks shifting from agentive constructor to curator of pre-formed discourse. The developmental sequence that scaffolding is designed to protect may be truncated before internalisation occurs.

This is the problem of invisible replacement. The collapse of scaffolding into substitution is neither dramatic nor overt; it unfolds quietly within high-quality outputs that mask diminished generative effort. Writing appears fluent, coherent, and advanced, yet the cognitive labour that produces durable competence may have been displaced. The pedagogical danger lies precisely in this invisibility: substitution can masquerade as successful support. The task, then, is to identify and theorise the threshold at which AI-mediated assistance ceases to sustain cognitive formation and instead performs it on behalf of the learner. It is this threshold, where mediation collapses into replacement, that demands rigorous applied-linguistic scrutiny.

2. The Zone of Proximal Development in the Age of Generative AI

Vygotsky’s (1978) formulation of the Zone of Proximal Development (ZPD) has long served as a cornerstone of L2 pedagogy, anchoring instructional design in the principle that learning emerges through guided participation in tasks that exceed the learner’s current independent capacity. Within this framework, scaffolding operates as contingent, dialogic, and strategically temporary support that enables the gradual internalisation of higher-order cognitive and linguistic processes. Crucially, the ZPD is not merely a space of assisted performance; it is a developmental corridor structured around progressive transfer of responsibility. Assistance is calibrated, responsive, and ultimately withdrawn so that competence becomes self-sustaining rather than externally maintained.

Generative AI, however, disrupts the classical architecture of the ZPD in ways that demand theoretical reconsideration. In AI-mediated writing environments, assistance is not contingent but constant, not gradually reduced but perpetually available. Large language models typically provide complete and rhetorically sophisticated solutions rather than graduated prompts or partial cues. Unless deliberately constrained by pedagogical design, these systems do not modulate support according to developmental readiness or communicative struggle. As a result, the learner is no longer positioned at the boundary of emerging competence but in direct proximity to fully formed linguistic performance.

This shift produces two profound reconfigurations of the ZPD. First, the boundary between assisted and independent performance becomes epistemically blurred. When AI output can be seamlessly integrated into student writing, it becomes increasingly difficult to determine where learner competence ends and algorithmic production begins. Second, the process of internalisation—the mechanism through which mediated activity becomes cognitively owned—risks attenuation. If learners incorporate AI-generated reformulations without reconstructing the reasoning behind lexical choice, syntactic configuration, or rhetorical organisation, the mediational pathway that scaffolding is designed to cultivate may be truncated. As Kasneci et al. (2023) caution, while LLMs offer significant educational potential, they simultaneously create conditions for over-reliance when active engagement is not structurally preserved.

Under AI conditions, the ZPD can no longer be conceptualised solely as the distance between what learners can do alone and what they can accomplish with support. It must instead be reframed as a site of epistemic accountability. The central question becomes whether assistance produces cognitive residue—whether the learner internalises the operations enacted by the system—or whether performance remains externally sustained. In this reinterpreted ZPD, developmental legitimacy depends not merely on improved output, but on demonstrable transformation in underlying cognitive capacity.

3. Cognitive Offloading or Distributed Cognition?

Contemporary discussions of AI-mediated writing frequently oscillate between two interpretive poles. At one extreme lies the concern that generative systems induce cognitive atrophy through systematic over-delegation; at the other, the assertion that AI constitutes a legitimate extension of human intellectual capacity within a distributed cognitive system (Hutchins, 1995). This tension reflects a deeper theoretical question: does AI externalise cognition in ways that weaken internal capacity, or does it reorganise cognitive labour without diminishing it? The question cannot be resolved at the level of technology alone; it must be examined through the lens of pedagogical design and learner engagement.

Cognitive offloading, as theorised in cognitive science, refers to the strategic externalisation of mental operations onto artefacts in order to reduce working memory load and processing demands (Risko & Gilbert, 2016). In L2 writing contexts, such offloading may involve delegating grammar correction, lexical retrieval, syntactic restructuring, or macro-level organisational planning to an AI system. While these practices may increase short-term efficiency and improve surface-level textual quality, they carry developmental implications. Durable learning depends on retrieval practice, generative effort, and elaborative processing—mechanisms that strengthen memory consolidation and transfer (Bjork et al., 2013). When linguistic formulation is routinely outsourced, the frequency and intensity of these strengthening operations may decline, potentially weakening long-term competence formation.

By contrast, distributed cognition reframes thinking as a systemic phenomenon that unfolds across individuals, tools, and environments (Hutchins, 1995). From this perspective, AI does not inherently replace cognition; rather, it reconfigures its locus. The learner’s cognitive task shifts from primary generation to secondary evaluation—scrutinising, selecting, adapting, and integrating AI-generated suggestions within a broader rhetorical framework. Under this model, cognition remains active, though differently organised. The intellectual work lies not in producing language ex nihilo, but in curating and critically interrogating machine-mediated proposals.

The distinction between cognitive offloading and distributed cognition is therefore not ontological but pedagogical. AI systems do not determine the nature of cognitive engagement; instructional design does. When learners are required to justify revisions, articulate metalinguistic reasoning, and reconstruct the principles underlying AI suggestions, cognitive labour remains distributed yet developmentally generative. When outputs are accepted reflexively, interpretive effort diminishes, and offloading approaches substitution. The critical variable is not whether cognition is shared with a system, but whether the learner continues to exercise epistemic agency within that system.

Accordingly, the collapse of mediation does not occur simply because AI is present. It occurs when interpretive effort disappears—when evaluation yields to automation and the cognitive operations essential for internalisation are silently displaced. The pedagogical challenge is thus to design AI-mediated environments in which distribution enhances engagement rather than dissolves it.

4. The Erosion of Productive Struggle

A foundational insight from cognitive science is that learning is strengthened, not hindered, by certain forms of difficulty. Effortful retrieval, generative processing, and elaborative engagement enhance long-term retention and transfer by deepening encoding and consolidating memory traces (Bjork et al., 2013). What has been termed “desirable difficulty” captures the paradox that productive struggle—difficulty that challenges without overwhelming—plays a constitutive role in durable competence formation. In L2 writing, this struggle is neither incidental nor peripheral; it is embedded in the act of lexical searching, syntactic experimentation, rhetorical recalibration, and iterative reformulation. It is precisely through these moments of hesitation and revision that linguistic control expands.

Generative AI, by design, reduces such friction. Where learners might previously engage in extended cognitive effort to resolve issues of phrasing, cohesion, or argument structure, AI systems now offer instant, polished alternatives. The temporal and cognitive gap between uncertainty and resolution collapses. While this may enhance immediate textual quality and increase perceptions of fluency, it also alters the distribution of cognitive labour within the writing process. When uncertainty is resolved externally and instantaneously, the opportunity for effortful retrieval and generative reconstruction is correspondingly diminished. The risk is not simply reduced difficulty, but the erosion of the developmental mechanisms that difficulty sustains.

As Luckin et al. (2022) argue, AI readiness in education requires preserving human-centred learning objectives rather than privileging automation as an end in itself. If AI resolves linguistic uncertainty too efficiently, the epistemic value of uncertainty—its role in prompting reflection, hypothesis testing, and self-correction—may be neutralised. Under such conditions, the classroom risks shifting from a site of cognitive formation to a site of performance optimisation. Writing becomes an exercise in output refinement rather than in competence construction.

This does not imply that struggle should be intensified indiscriminately or that AI must be excluded from revision practices. Rather, it suggests that pedagogical design must deliberately preserve epistemic effort within AI-mediated environments. Writing tasks should continue to require formulation before consultation, justification of revisions, and articulation of metalinguistic reasoning. Productive struggle must be scaffolded rather than eliminated. Without such structuring, mediation loses its developmental function, and the gradual internalisation of linguistic competence gives way to substitution.

5. Detecting the Threshold: When Does Scaffolding Become Substitution?

If the central pedagogical concern is the potential collapse from scaffolding into substitution, then the task becomes diagnostic as much as theoretical. The transition is rarely dramatic; it does not announce itself through visible failure. Instead, it manifests subtly within increasingly polished outputs that may conceal diminishing developmental engagement. Detecting this threshold therefore requires attention to structural indicators within classroom practice rather than reliance on surface textual quality.

A first indicator is the loss of process visibility. In traditional models of writing instruction, drafts, revisions, marginal comments, and oral explanations provide insight into learners’ evolving reasoning. These artefacts allow teachers to trace developmental trajectories and assess the relationship between assistance and internalisation. When AI systems mediate substantial portions of formulation and revision without transparent documentation of intermediate thinking, this visibility diminishes. Construct validity becomes unstable: a fluent final product can no longer be confidently interpreted as evidence of underlying competence. The epistemic link between performance and capacity weakens, and assessment risks conflating algorithmic contribution with learner development.

A second indicator concerns the attenuation of generative effort. Writing competence is reinforced through repeated acts of retrieval, syntactic assembly, and rhetorical organisation. If learners consistently rely on AI systems to perform lexical selection and structural reconfiguration, the frequency of these strengthening operations may decline. Over time, retrieval pathways that would otherwise be reinforced through practice risk remaining underdeveloped. The issue is not occasional consultation but habitual delegation. When generative formulation becomes externally sustained rather than internally reconstructed, scaffolding approaches substitution.

A third indicator is identity displacement. Writing is not merely a technical act; it is a site of authorship and epistemic positioning. If learners come to perceive AI output as categorically superior to their own linguistic production, the locus of authority may shift from self to system. This subtle recalibration of epistemic confidence can erode authorship agency, fostering dependency rather than autonomy. In such cases, substitution operates not only at the cognitive level but at the level of identity, reshaping how learners understand their legitimacy as writers.

Selwyn et al. (2025) observe that teachers increasingly find themselves managing the frailties of generative systems, including learner passivity and algorithmic normativity. This managerial labour is not peripheral but central to sustaining pedagogical integrity. AI systems do not inherently scaffold; they provide solutions. Scaffolding, by contrast, requires intentional design, calibrated constraint, and structured accountability. It must be engineered through task architecture that preserves interpretive effort and developmental transparency. The threshold between mediation and substitution, therefore, is not technologically determined but pedagogically governed. It is crossed when assistance ceases to cultivate independent capacity and instead performs, in the learner's absence, the work that such capacity would otherwise carry out.

6. Designing for Mediated Cognition Rather Than Replacement

If generative AI is to operate as scaffold rather than substitute, pedagogical design must actively restore the epistemic friction that algorithmic systems tend to eliminate. Scaffolding is not synonymous with solution provision; it is structured assistance that sustains cognitive participation and culminates in independent capacity. In AI-mediated writing environments, this requires deliberate architectural decisions that prevent the silent transfer of linguistic labour from learner to machine. The objective is not to restrict access to AI tools per se, but to ensure that engagement with those tools remains cognitively generative rather than performatively consumptive.

One crucial strategy involves reorienting AI interaction from answer production to hypothesis generation. When learners treat AI suggestions as provisional proposals—objects of evaluation rather than definitive solutions—they remain positioned as epistemic agents. Requiring explicit justification of revisions compels learners to reconstruct the linguistic reasoning underlying adopted changes, thereby reactivating retrieval pathways and metalinguistic awareness. Similarly, limiting full-text rewrites prevents the wholesale displacement of generative formulation, preserving the necessity of initial construction before consultation. Embedding oral explanation tasks further strengthens this structure by demanding that learners articulate, in real time, the rationale for rhetorical and lexical decisions. Such practices render cognitive processes visible and restore accountability to the developmental trajectory of writing.

These design principles resonate with UNESCO’s (2023) guidance that AI integration must safeguard learner autonomy, critical judgement, and human-centred educational purposes. The teacher’s role consequently intensifies rather than diminishes. No longer confined to feedback provision, the teacher becomes a curator of epistemic participation, responsible for structuring the conditions under which AI engagement enhances rather than bypasses cognitive effort. This involves continuous calibration: identifying when assistance extends competence and when it risks supplanting it.

Under such pedagogical conditions, mediation regains its defining characteristics. It becomes observable, justified, and progressively internalised. The learner remains the locus of authorship and cognitive growth, even within an environment of technological abundance. Absent these structuring mechanisms, however, the developmental corridor of the ZPD narrows. What appears as support may instead crystallise into outsourced performance, where fluency is achieved without formation and competence remains externally sustained rather than internally consolidated.

7. Conclusion: Preserving Development Under Conditions of Abundance

Generative AI does not, in and of itself, dismantle the foundations of learning. What it disrupts is the economy of effort upon which much cognitive development depends. By dramatically reducing the friction historically embedded in writing—lexical searching, syntactic experimentation, rhetorical recalibration—AI systems alter the balance between production and formation. The pedagogical risk does not lie in automation as such, but in invisible replacement: the gradual and often undetected transfer of generative cognitive operations from learner to machine without corresponding engagement in interpretive reconstruction. Under such conditions, textual fluency may increase even as internal capacity stagnates.

Scaffolding, properly understood, is not synonymous with assistance. It is assistance structured toward internalisation. Its defining feature is not that it helps, but that it gradually renders itself unnecessary by cultivating independent competence. In AI-mediated environments, the presence of support is constant; what varies is its developmental orientation. When AI engagement is organised around reflection, justification, and the deliberate reconstruction of linguistic reasoning, mediation remains intact. The learner continues to exercise epistemic agency, and cognitive residue accumulates through interpretive effort. When these processes are bypassed—when suggestions are adopted without scrutiny and formulation is consistently outsourced—substitution supplants scaffolding.

The future of AI-mediated L2 writing pedagogy hinges on preserving this distinction with conceptual clarity and instructional precision. Abundance of fluent language does not guarantee development. What sustains growth is engagement in the intellectual labour through which language is appropriated, reorganised, and ultimately owned. The challenge, therefore, is not to restrict technological capacity, but to ensure that such capacity remains developmentally generative rather than cognitively displacing. In environments of unprecedented algorithmic abundance, the preservation of human formation becomes the central pedagogical task.

References

Bjork, R. A., Dunlosky, J., & Kornell, N. (2013). Self-regulated learning: Beliefs, techniques, and illusions. Annual Review of Psychology, 64, 417–444.

Hutchins, E. (1995). Cognition in the wild. MIT Press.

Kasneci, E., Sessler, K., Küchemann, S., Bannert, M., Dementieva, D., Fischer, F., et al. (2023). ChatGPT for good? On opportunities and challenges of large language models for education. Learning and Individual Differences, 103, 102274.

Luckin, R., Cukurova, M., Kent, C., & du Boulay, B. (2022). Empowering educators to be AI-ready. Computers and Education: Artificial Intelligence, 3, 100076.

Risko, E. F., & Gilbert, S. J. (2016). Cognitive offloading. Trends in Cognitive Sciences, 20(9), 676–688.

Selwyn, N., Ljungqvist, M., & Sonesson, A. (2025). When the prompting stops: Exploring teachers’ work around the educational frailties of generative AI tools. Learning, Media and Technology. Advance online publication.

UNESCO. (2023). Guidance for generative AI in education and research. UNESCO.

Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes. Harvard University Press.
