Do Large Language Models Flatten Rhetorical Diversity in EFL Writing?

1. From Fluency to Norm Production

The rapid adoption of large language models (LLMs) in L2 writing pedagogy has largely been framed as a technological enhancement of feedback efficiency and linguistic precision. Yet such framings underestimate the epistemic function of generative systems. LLMs do not merely generate improved language; they operationalise statistical centrality as normative legitimacy. In doing so, they participate in the production of linguistic value.

Language, particularly in academic writing, is never neutral. It is embedded within hierarchies of prestige, institutional gatekeeping, and historically sedimented rhetorical conventions. When LLMs are deployed in EFL classrooms, they bring with them the statistical imprint of dominant Anglophone discourse practices. The result is not simply correction but convergence. What appears as optimisation may in fact be normalisation.

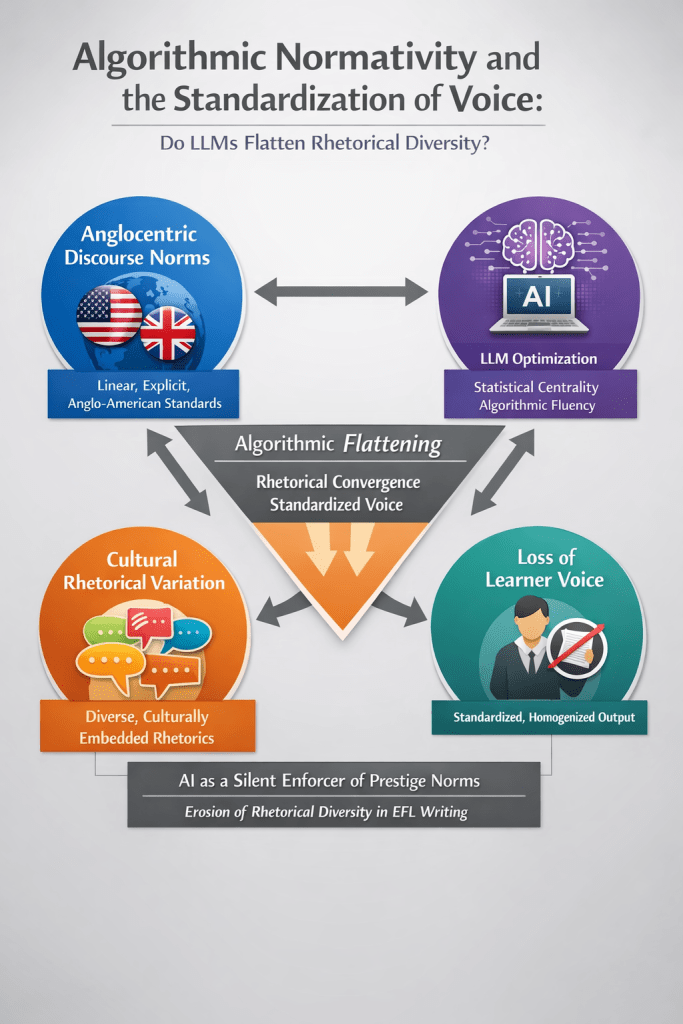

The central argument of this article is that LLM-mediated writing feedback risks accelerating a process of algorithmic rhetorical convergence, whereby diverse rhetorical traditions are gradually standardised toward high-frequency Anglo-American academic norms. This convergence is neither intentional nor explicit. It is structural, probabilistic, and often invisible. Yet its pedagogical implications are profound.

2. Algorithmic Normativity: When Probability Becomes Legitimacy

LLMs generate output by predicting the most statistically probable continuation of a given textual prompt. However, probability is not a neutral metric. It reflects frequency patterns embedded within training data, which are disproportionately drawn from English-dominant, institutionally produced, and digitally visible corpora (Bender et al., 2021). As such, LLM outputs represent an aggregated centre of gravity within global English discourse.
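The mechanism described above can be illustrated with a deliberately simplified sketch. It is not how an LLM is implemented: real systems use neural networks over subword tokens and usually sample rather than always taking the top choice. The toy corpus, the 8-to-2 split between deductive and inductive openings, and the function names are all hypothetical, chosen only to show how frequency in the training data becomes the default output.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: 8 of 10 essays open deductively, 2 inductively.
corpus = (
    ["this essay argues that"] * 8
    + ["let us begin with a story"] * 2
)

def train_bigrams(sentences):
    """Count which word follows which; '<s>' marks the start of a text."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def greedy_continuation(counts, max_len=6):
    """Always choose the single most frequent next word (greedy decoding)."""
    word, output = "<s>", []
    for _ in range(max_len):
        if word not in counts:  # no observed continuation: stop
            break
        word = counts[word].most_common(1)[0][0]
        output.append(word)
    return " ".join(output)

counts = train_bigrams(corpus)

# Relative frequency of each opening word in the toy corpus.
total_starts = sum(counts["<s>"].values())
start_probs = {w: c / total_starts for w, c in counts["<s>"].items()}

result = greedy_continuation(counts)
print(start_probs)
print(result)
```

In this sketch the inductive opening is present in the data but never surfaces, because the most probable continuation is always the majority pattern. Sampling would let the minority opening appear some of the time, yet the centre of gravity would remain where the corpus frequencies place it.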

This statistical centrality easily acquires normative authority in pedagogical contexts. Learners encountering AI-suggested revisions are presented with outputs that appear fluent, coherent, and stylistically refined. The system does not explicitly claim superiority, yet its fluency functions rhetorically as evidence of correctness. Over time, this dynamic may recalibrate learners’ evaluative frameworks: what is statistically dominant is internalised as what is rhetorically legitimate.

This process can be conceptualised as algorithmic normativity — the transformation of high-frequency linguistic patterns into de facto standards through probabilistic modelling. Unlike prescriptive grammar rules, algorithmic norms are implicit. They are embedded in suggestions that “improve clarity,” “enhance coherence,” or “strengthen argumentation.” Yet beneath these surface-level adjustments lies a systematic orientation toward discourse centrality.

Crucially, algorithmic normativity differs from traditional standardisation mechanisms because it operates at scale and with apparent neutrality. It does not argue for Anglo-American academic style; it simply produces it repeatedly. In doing so, it naturalises particular rhetorical forms while rendering others statistically peripheral.

3. Anglocentric Discourse and the Reproduction of Linguistic Capital

English as a global academic language has long been entangled with questions of linguistic imperialism and epistemic dominance (Phillipson, 1992). Academic writing conventions — linear thesis articulation, explicit argument mapping, calibrated hedging, individualised stance — are historically situated within Anglo-American scholarly traditions (Hyland, 2005). Yet these conventions have become institutionalised as universal standards in many global education systems.

When LLMs reproduce these conventions at scale, they amplify existing asymmetries of linguistic capital. Bourdieu’s (1991) theory of symbolic power provides a useful analytical lens here: linguistic forms accrue value not because they are inherently superior, but because they are recognised as legitimate within dominant institutions. LLM outputs disproportionately reproduce these high-capital forms because they are overrepresented in training corpora.

In EFL contexts, where learners are already navigating asymmetrical linguistic hierarchies, AI-mediated feedback may intensify pressures toward conformity. Stylistic revisions frequently compress sentences, foreground explicit thesis statements, increase metadiscursive signalling, and recalibrate stance toward Anglo-academic norms. While these adjustments may enhance alignment with certain academic expectations, they also reinforce the idea that legitimacy lies in convergence.

The risk is not that learners adopt widely accepted conventions. The risk is that alternative rhetorical logics become delegitimised by omission. When AI consistently privileges one discursive centre, it narrows the perceived horizon of acceptable expression.

4. Cultural Rhetorical Variation and the Erasure of Discursive Plurality

Contrastive rhetoric and intercultural rhetoric scholarship have long demonstrated that rhetorical organisation varies across educational traditions (Connor, 2002; Kubota & Lehner, 2004). Argumentation may unfold inductively rather than deductively; thesis statements may be implied rather than foregrounded; stance may be collectivist rather than individualised. Such patterns are not deficits but culturally embedded communicative strategies.

LLMs, however, are not trained to recognise rhetorical legitimacy in pluralistic terms. They are optimised to predict probable continuations based on corpus frequency. As a result, culturally inflected discourse patterns may be statistically marginal and therefore treated as candidates for refinement. When AI systems “clarify” implicit thesis positioning or restructure inductive reasoning into deductive form, they are not merely improving coherence; they are aligning texts with dominant statistical norms.

This dynamic risks transforming rhetorical variation into perceived inadequacy. Learners may internalise the message that deviation from Anglo-American linearity signals weakness rather than difference. Over time, such iterative micro-adjustments may produce macro-level convergence.

Importantly, this process does not require explicit ideological intent. It emerges from the interaction between corpus bias and pedagogical uptake. The flattening of rhetorical diversity is thus an emergent property of probabilistic modelling rather than a deliberate imposition. Yet its consequences are pedagogically significant.

5. Stylistic Optimisation and the Gradual Loss of Voice

Voice in L2 writing encompasses stance, identity positioning, evaluative alignment, and rhetorical presence (Hyland, 2008). It is developed through iterative negotiation between intention and expression. Generative AI systems, however, are optimised for stylistic smoothing and lexical enhancement. Their revisions often reduce redundancy, tighten argumentation, and recalibrate tone toward academic formality.

While such optimisation may improve surface fluency, it can also attenuate idiosyncratic expression. When learners repeatedly accept AI-generated reformulations, subtle shifts accumulate. Lexical uniqueness may give way to high-frequency collocations; tentative or culturally nuanced phrasing may be replaced by standardised metadiscourse. The resulting text may appear more polished but less individually situated.

UNESCO (2023) emphasises that AI integration in education must safeguard cultural diversity and learner agency. Yet safeguarding voice requires more than ensuring grammatical accuracy; it requires recognising that stylistic optimisation is not neutral. It encodes assumptions about what counts as authoritative expression.

If learners begin to equate “better writing” with alignment to algorithmic centrality, authorial agency may be gradually redefined. Voice becomes something to be calibrated toward a statistical norm rather than cultivated as rhetorical positioning. In such contexts, optimisation risks becoming homogenisation.

6. AI as a Silent Enforcer of Prestige Norms

Unlike traditional prescriptive mechanisms, algorithmic normativity operates without explicit prescription. There is no rulebook mandating Anglo-American linearity. Instead, prestige forms emerge through repetition. Each suggested revision that increases explicitness, tightens structure, or formalises tone incrementally reorients the learner toward dominant discourse.

Selwyn (2023) argues that AI systems must be understood as sociotechnical actors embedded within existing power relations. In L2 writing classrooms, this embedding manifests as the reproduction of global hierarchies of linguistic capital. The system appears neutral because it is computational. Yet computational neutrality does not equate to sociolinguistic neutrality.

The silent enforcement lies precisely in invisibility. Because AI-generated revisions are framed as enhancements rather than corrections of cultural variation, they rarely trigger critical reflection. The algorithm does not say, “This rhetorical pattern is culturally marginal.” It simply offers an alternative that appears more polished.

Over time, such iterations may normalise convergence as the default trajectory of improvement. Diversity becomes statistically peripheral; centrality becomes pedagogically aspirational.

7. Preserving Rhetorical Plurality in AI-Mediated Classrooms

If LLMs are to function as pedagogical resources rather than norm-amplifying filters, rhetorical plurality must be explicitly foregrounded. Teachers must render visible the probabilistic basis of AI suggestions and contextualise stylistic revisions within broader intercultural frameworks.

This may involve critical comparison between original drafts and AI rewrites, metadiscursive discussion of rhetorical traditions, and structured reflection on voice preservation. Such practices reposition AI as a negotiable interlocutor rather than an authoritative arbiter of legitimacy.
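One way to support the comparison of drafts and AI rewrites is a simple word-level diff that shows learners exactly which stretches of their text were replaced. The sketch below uses Python's standard `difflib` module; the function name `word_level_changes` and the two example sentences are hypothetical illustrations, not drawn from classroom data, and the similarity ratio is only a rough indicator of how much of the original wording survives.

```python
import difflib

def word_level_changes(draft: str, rewrite: str):
    """Return the non-matching spans between two texts and a similarity ratio."""
    a, b = draft.split(), rewrite.split()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":  # keep only replaced, deleted, or inserted spans
            changes.append((tag, " ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes, matcher.ratio()

# Hypothetical learner draft and AI rewrite, for illustration only.
draft = "In my country people often say that education is a mirror"
rewrite = "This essay argues that education is a mirror"

changes, ratio = word_level_changes(draft, rewrite)
for tag, before, after in changes:
    print(f"{tag}: '{before}' -> '{after}'")
print(f"similarity: {ratio:.2f}")
```

The output lists what was changed, not whether the change was an improvement. That judgement stays with the learner and teacher, which is the point of the exercise: here the culturally situated opening is the part that was replaced, and the class can discuss whether that replacement served the writer's purpose.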

Ultimately, the question is not whether AI-generated English is fluent. It is whether fluency is being conflated with universality. In multilingual writing development, universality is neither achievable nor desirable. Competence entails adaptability across rhetorical contexts, not assimilation into a singular statistical centre.

8. Conclusion: Safeguarding Rhetorical Plurality Under Algorithmic Mediation

Large language models do not operate with intentional ideological agendas, nor do they consciously seek to erase rhetorical diversity. However, intentionality is not the relevant analytical variable. Through probabilistic modelling of high-frequency textual patterns drawn from disproportionately Anglophone and institutionally dominant corpora, LLMs reproduce the statistical centre of global English discourse. When deployed at scale in EFL writing classrooms, this statistical centre can gradually acquire normative force. What is most frequent becomes what is most fluent; what is most fluent becomes what appears most legitimate. In this way, convergence toward Anglocentric discourse norms may be accelerated not by explicit prescription, but by iterative optimisation.

In EFL contexts already structured by asymmetries of linguistic capital, this convergence has cumulative effects. Learners navigating global academic spaces often experience implicit pressures to align with dominant rhetorical expectations in order to secure institutional legitimacy. When AI systems consistently recalibrate texts toward Anglo-American linearity, explicitness, and stance conventions, they may amplify these pressures. Over time, the repeated alignment of “improvement” with statistical centrality can subtly recalibrate learners’ internal standards of adequacy. Legitimacy becomes equated with convergence; deviation becomes tacitly framed as deficit. This recalibration does not occur through overt correction of cultural difference, but through the steady normalisation of a narrow rhetorical centre.

The pedagogical imperative, therefore, is not technological rejection but epistemic vigilance. AI-mediated feedback must be critically mediated rather than passively integrated. Educators must make visible the probabilistic foundations of algorithmic suggestions and explicitly distinguish between communicative effectiveness and conformity to dominant norms. Fluency must not be conflated with universality, nor optimisation mistaken for neutral enhancement. A draft may be statistically atypical yet rhetorically situated in ways that merit preservation rather than smoothing.

If rhetorical diversity is to remain a defining feature of global English, generative systems must be positioned as dialogic interlocutors rather than silent arbiters of legitimacy. This requires pedagogical practices that foreground multiplicity, encourage comparative rhetorical analysis, and validate culturally embedded discourse strategies alongside dominant academic conventions. The goal is not to resist global intelligibility, but to ensure that intelligibility does not collapse into homogenisation.

In the age of algorithmic mediation, safeguarding voice extends beyond classroom technique; it becomes a scholarly and ethical responsibility. Applied linguistics has long interrogated the politics of standardisation and the circulation of linguistic capital. The integration of generative AI into writing pedagogy demands that this critical tradition be sustained rather than sidelined. If LLMs are to serve multilingual writers rather than silently reshape them, educators and researchers must ensure that algorithmic fluency enhances expressive range rather than narrowing it.

References

Bender, E. M., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021). On the dangers of stochastic parrots: Can language models be too big? Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 610–623.

Bourdieu, P. (1991). Language and symbolic power. Harvard University Press.

Connor, U. (2002). New directions in contrastive rhetoric. TESOL Quarterly, 36(4), 493–510.

Hyland, K. (2005). Metadiscourse: Exploring interaction in writing. Continuum.

Hyland, K. (2008). Voice in second language writing. Annual Review of Applied Linguistics, 28, 5–22.

Kubota, R., & Lehner, A. (2004). Toward critical contrastive rhetoric. Journal of Second Language Writing, 13(1), 7–27.

Phillipson, R. (1992). Linguistic imperialism. Oxford University Press.

Selwyn, N. (2023). Should robots replace teachers? AI and the future of education. Polity Press.

UNESCO. (2023). Guidance for generative AI in education and research. UNESCO.
