Redesigning Writing Evaluation Around Traceable Thinking

1. Introduction: From Product Authenticity to Process Legibility

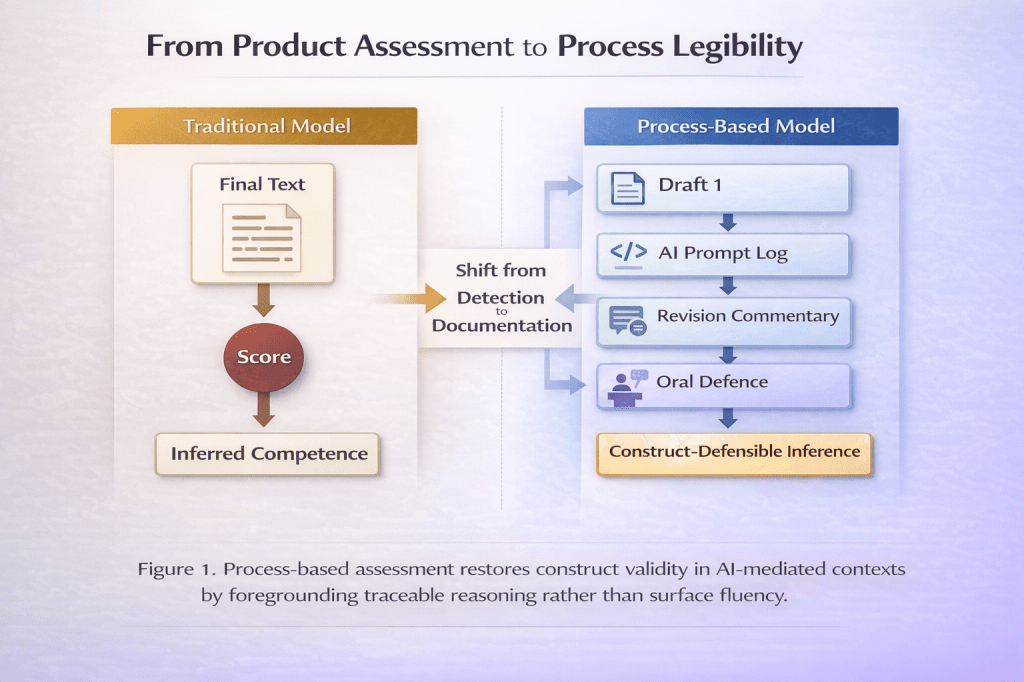

The proliferation of generative AI in writing contexts has rendered traditional product-based assessment epistemically unstable. When fluent texts can be algorithmically generated, the central concern shifts from authorship detection to construct defensibility. Attempts to police AI use through detection technologies have proven both unreliable and ethically problematic (Perkins et al., 2023). The more urgent question is not how to identify AI-generated text, but how to redesign assessment so that it captures traceable thinking rather than surface fluency.

Process-based assessment offers a principled alternative. Rather than equating writing ability with the polished final product, process-oriented approaches foreground revision trajectories, decision-making rationales, and metacognitive articulation (Hyland, 2019). In AI-mediated environments, such process visibility becomes a condition of validity. If assessment continues to reward textual polish alone, it risks certifying simulated proficiency. If, however, it captures the evolution of thought, justification of revisions, and strategic use of AI prompts, it may restore epistemic transparency without resorting to surveillance.

This article argues that revision logs, prompt histories, and oral defence components can be integrated into writing assessment as mechanisms of accountability that preserve learner agency. Such redesign aligns pedagogy with policy by shifting from detection to documentation.

2. Theoretical Foundations: Writing as Process and Construct Representation

Writing has long been theorised as recursive, developmental, and socially mediated (Flower & Hayes, 1981; Hyland, 2019). Process approaches emphasise drafting, feedback cycles, and revision as sites of learning rather than as peripheral stages preceding the “real” product. However, high-stakes assessment regimes have historically prioritised final outputs due to scalability and reliability constraints (Weigle, 2002).

Once generative AI is widely available, this product emphasis becomes untenable. If writing constructs are intended to capture lexical control, rhetorical organisation, and argumentative reasoning, then observable process traces may provide more defensible evidence than the final text alone. Messick’s (1989) unified theory of validity reminds us that construct representation must align with intended inferences. When the construct includes reasoning, planning, and revision competence, assessment instruments must capture those dimensions directly.

Process-based assessment thus becomes not a pedagogical preference but a validity imperative. It shifts the locus of inference from what the text looks like to how the text came into being.

3. Process Portfolios as Evidence of Cognitive Trajectory

One mechanism for operationalising traceable thinking is the process portfolio. Rather than submitting a single essay, learners submit staged drafts accompanied by revision commentaries and decision rationales. These portfolios make visible the evolution of ideas, the incorporation or rejection of feedback, and the development of structural coherence.

In AI-mediated contexts, portfolios can include annotated prompt histories and reflective commentary explaining how AI suggestions were evaluated. This does not require banning AI; it requires contextualising it. The learner’s competence is evidenced not by abstention from AI but by critical engagement with it.
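For readers who want to see what such a portfolio might look like as a submission record, the following is a minimal illustrative sketch in Python. The schema is an assumption made here for illustration, not a format proposed in the literature cited: the class names (`PromptEntry`, `DraftStage`, `ProcessPortfolio`), their fields, and the two completeness checks are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptEntry:
    """One documented AI interaction (hypothetical schema)."""
    prompt: str      # what the learner asked the tool
    decision: str    # e.g. "accepted", "adapted", or "rejected"
    rationale: str   # the learner's stated reason for that decision


@dataclass
class DraftStage:
    """A staged draft together with its accompanying commentary."""
    label: str
    text: str
    revision_commentary: str = ""
    prompt_history: List[PromptEntry] = field(default_factory=list)


@dataclass
class ProcessPortfolio:
    """A learner's staged drafts, in submission order (illustrative only)."""
    learner_id: str
    stages: List[DraftStage] = field(default_factory=list)

    def undocumented_stages(self) -> List[str]:
        """Labels of drafts after the first that lack a revision commentary."""
        return [s.label for s in self.stages[1:]
                if not s.revision_commentary.strip()]

    def unjustified_prompts(self) -> List[str]:
        """Prompts that were recorded without any rationale."""
        return [p.prompt
                for s in self.stages
                for p in s.prompt_history
                if not p.rationale.strip()]
```

The checks flag only missing documentation; they make no judgement about the quality of the reasoning, which remains the assessor's task. The first draft is exempt from the commentary check on the assumption that there is nothing yet to revise.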

Research on formative feedback and metacognitive reflection suggests that explicit articulation of revision decisions enhances learning transfer and self-regulation (Carless & Boud, 2018). When integrated into assessment, such articulation also strengthens construct validity. The assessor evaluates not only textual accuracy but epistemic responsibility.

Process portfolios therefore transform AI from a hidden variable into an examinable component of writing practice.

4. Oral Defence Components and Epistemic Accountability

A second mechanism involves incorporating oral defence elements into writing assessment. Oral defence has long been used in doctoral examinations as a means of verifying authorship and conceptual ownership. Adapted appropriately, it can serve as a complementary validation mechanism in AI-mediated undergraduate and postgraduate contexts.

In practice, learners may be asked to explain key argumentative decisions, justify structural revisions, or elaborate on counter-arguments. The aim is not to interrogate authenticity punitively but to assess alignment between written claims and conceptual understanding. Kane’s (2006) argument-based validation framework underscores the need for coherent inferential chains. Oral components provide an additional evidential link between product and competence.

Importantly, such practices must be pedagogically framed as opportunities for metacognitive articulation rather than as suspicion-driven checks. When designed constructively, oral defence reinforces epistemic agency and discourages passive reliance on algorithmic outputs.

5. Prompt Transparency Policies: Accountability Without Surveillance

The integration of prompt transparency policies offers a third dimension of process-based assessment. Rather than prohibiting AI use or relying on detection software, institutions can require disclosure of AI assistance within defined parameters. This approach aligns with UNESCO’s (2023) guidance advocating human-centred governance of generative AI in education.

Prompt transparency involves documenting the nature and purpose of AI interactions: Was the tool used for brainstorming? For grammatical refinement? For structural reorganisation? Such documentation situates AI use within a pedagogical narrative rather than framing it as misconduct.
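As one possible way of making such disclosure concrete, the sketch below records each AI interaction under one of the three purposes named above and tallies them for the assessor. It is an assumption-laden illustration only: the `Purpose` categories mirror the questions in the preceding paragraph, while the `Disclosure` record and the `summarise` helper are hypothetical names introduced here, not part of any institutional policy or of the UNESCO guidance.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Purpose(Enum):
    """Purposes of AI use named in the text (illustrative categories)."""
    BRAINSTORMING = "brainstorming"
    GRAMMATICAL_REFINEMENT = "grammatical refinement"
    STRUCTURAL_REORGANISATION = "structural reorganisation"


@dataclass(frozen=True)
class Disclosure:
    """A single learner-authored disclosure (hypothetical record)."""
    purpose: Purpose
    description: str  # the learner's own account of what the tool was used for


def summarise(disclosures: List[Disclosure]) -> Dict[str, int]:
    """Count disclosures per purpose, including purposes with zero entries."""
    counts = Counter(d.purpose.value for d in disclosures)
    return {p.value: counts.get(p.value, 0) for p in Purpose}
```

The record holds only what the learner chooses to write down; nothing is captured automatically, which keeps the sketch on the documentation side of the line between transparency and monitoring.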

However, transparency must avoid becoming surveillance. Excessive monitoring of digital traces risks undermining trust and infringing privacy. Selwyn (2023) cautions that educational AI governance must balance accountability with learner rights. Process documentation should therefore be limited to what is pedagogically relevant and to what learners themselves disclose under the published assessment criteria, rather than extending to whatever could be harvested through monitoring.

The objective is not to track every keystroke, but to render reasoning legible.

6. Reframing Accountability: From Detection to Documentation

The prevailing policy discourse often centres on detection technologies designed to identify AI-generated text. Yet empirical analyses indicate that AI detection tools exhibit high false-positive rates and disproportionately flag the writing of non-native speakers (Perkins et al., 2023). Detection thus introduces construct-irrelevant variance and potential inequity.

Documentation-based approaches offer a more defensible alternative. By requiring learners to evidence revision pathways, justify AI use, and articulate decision-making processes, assessment shifts from suspicion to substantiation. Accountability becomes developmental rather than punitive.

This reframing also aligns with sociocultural models of mediation. Writing competence is not an isolated cognitive event but an interaction between tools, social context, and reflective agency. Making that interaction visible preserves both academic integrity and pedagogical coherence.

7. Policy Implications: Aligning Governance and Pedagogy

For institutions, process-based assessment requires policy recalibration. Academic integrity guidelines must move beyond binary distinctions between permitted and prohibited AI use. Instead, policies should articulate acceptable forms of assistance, required documentation standards, and assessment criteria that prioritise epistemic transparency.

At programme level, modules on academic writing and teacher education should incorporate training on revision logs, reflective commentaries, and AI prompt documentation. Such integration ensures coherence between policy expectations and classroom practice.

By aligning governance structures with pedagogical design, institutions can foster cultures of responsible AI engagement rather than adversarial enforcement.

8. Conclusion: Assessing Thinking in the Age of Fluent Machines

Generative AI challenges not only authorship norms but the epistemology of writing assessment. If fluent products can be externally generated, assessment must shift toward traceable thinking. Revision logs, process portfolios, oral defence components, and prompt transparency policies offer principled mechanisms for preserving construct validity without resorting to surveillance.

The future of writing assessment lies not in policing outputs but in documenting reasoning. When thinking becomes visible, competence becomes defensible.

References

Carless, D., & Boud, D. (2018). The development of student feedback literacy: Enabling uptake of feedback. Assessment & Evaluation in Higher Education, 43(8), 1315–1325.

Flower, L., & Hayes, J. R. (1981). A cognitive process theory of writing. College Composition and Communication, 32(4), 365–387.

Hyland, K. (2019). Second language writing (2nd ed.). Cambridge University Press.

Kane, M. (2006). Validation. In R. L. Brennan (Ed.), Educational measurement (4th ed., pp. 17–64). Praeger.

Messick, S. (1989). Validity. In R. L. Linn (Ed.), Educational measurement (3rd ed., pp. 13–103). Macmillan.

Perkins, M., Furze, L., Roe, J., & MacVaugh, J. (2023). The rise and fall of AI detection tools in education: False positives and fairness concerns. Journal of Academic Ethics, 21, 1–15.

Selwyn, N. (2023). Should robots replace teachers? AI and the future of education. Polity Press.

UNESCO. (2023). Guidance for generative AI in education and research. UNESCO.
