Re-Theorising Professional Competence Under Conditions of Algorithmic Mediation

1. Introduction: Beyond Instrumental AI Literacy

Calls for “AI literacy” in teacher education have proliferated rapidly following the emergence of generative large language models (LLMs). However, much of the discourse remains instrumentally framed: teacher trainees are expected to learn how to use AI tools, detect AI-generated text, or incorporate automated feedback into lesson planning. Such framings are pedagogically insufficient and theoretically underdeveloped. They treat AI as a technological add-on rather than as an epistemic reconfiguration of language pedagogy.

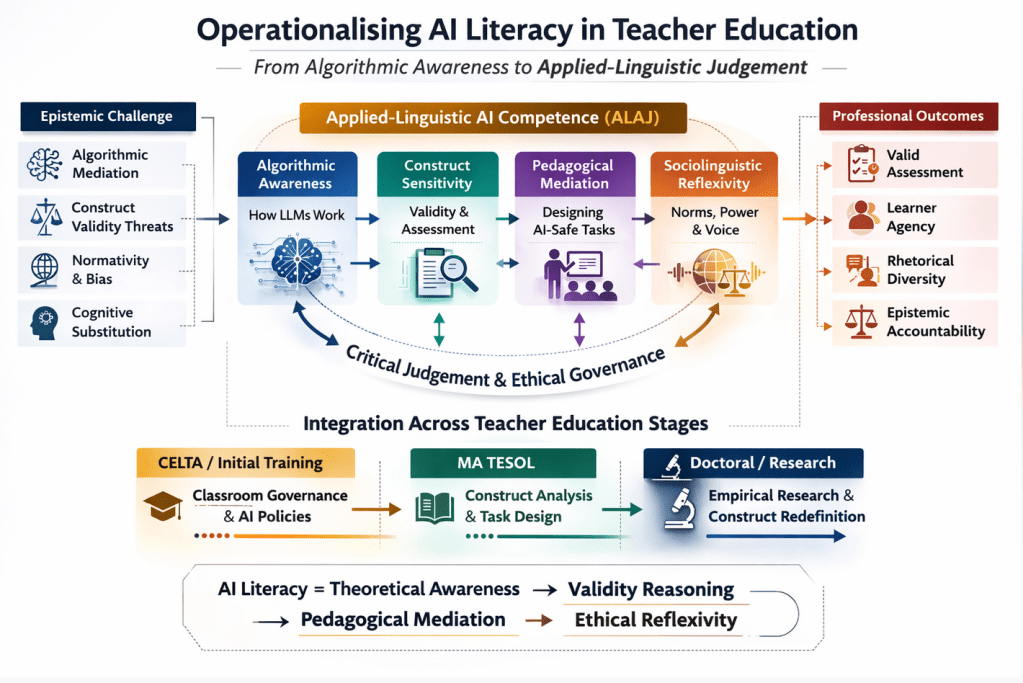

The integration of generative AI into writing classrooms does not merely introduce new tools; it destabilises foundational assumptions about authorship, assessment, construct validity, rhetorical normativity, and learner agency. If teacher education is to respond adequately, AI literacy must be reconceptualised not as technical competence but as applied-linguistic judgement. The central argument of this article is that AI literacy in teacher education should be operationalised as a theoretically grounded construct encompassing algorithmic awareness, construct sensitivity, pedagogical mediation, and sociolinguistic reflexivity. Without such operationalisation, AI integration risks becoming epistemically naïve and pedagogically incoherent.

2. From Digital Literacy to Algorithmic Judgement

Digital literacy frameworks have historically emphasised access, evaluation of online sources, multimodal communication, and critical consumption of digital content. Generative AI, however, introduces a qualitatively different challenge. It does not merely provide information; it produces language that simulates competence. The teacher is therefore confronted not with issues of source credibility alone, but with questions about the nature of writing ability, the integrity of assessment constructs, and the distribution of cognitive labour between human and machine.

Bender et al. (2021) remind us that large language models are probabilistic systems trained on massive corpora reflecting existing sociolinguistic hierarchies. Their outputs are neither neutral nor epistemically transparent. Selwyn (2023) further argues that AI in education must be understood as a sociotechnical actor embedded in institutional power relations rather than as a neutral productivity enhancer. These perspectives compel a shift from instrumental AI literacy to what may be termed algorithmic judgement: the professional capacity to interrogate how generative systems reshape pedagogical, epistemic, and ethical landscapes.

Algorithmic judgement requires teachers to understand not simply what AI can do, but what it does to constructs of writing ability, to learner identity, and to institutional evaluation systems. It demands theoretical integration rather than tool experimentation.

3. Construct Sensitivity as Core Professional Competence

One of the most under-theorised aspects of AI literacy is construct sensitivity. Writing assessment rests on defensible inferences linking textual performance to underlying competence (Messick, 1989; Kane, 2006). When generative systems contribute to textual production, the inferential logic underpinning assessment is destabilised: a fluent, well-organised essay can no longer be taken as direct evidence that the learner could plan and draft such a text unaided. Teachers who lack construct sensitivity may unknowingly validate AI-augmented performance as independent competence, thereby distorting assessment decisions.

Operationalising AI literacy must therefore include the capacity to articulate and defend validity arguments under AI-mediated conditions. This involves recognising threats such as construct-irrelevant variance introduced by algorithmic augmentation and identifying conditions under which AI use may mask rather than reveal linguistic competence. It also requires teachers to distinguish between independent proficiency and AI-supported performance when designing tasks and evaluating outcomes.

In teacher education, construct sensitivity should not remain confined to specialist assessment modules. It must become a cross-cutting competence embedded in writing pedagogy, curriculum design, and feedback practices. Without such integration, AI literacy remains superficial.

4. Pedagogical Mediation Under Algorithmic Conditions

If AI systems can generate fluent texts, the teacher’s role shifts from knowledge provider to epistemic mediator. This mediation involves structuring classroom practices in ways that preserve cognitive engagement, productive struggle, and learner agency despite the availability of algorithmic assistance. Operationalising AI literacy thus entails equipping teacher trainees with the capacity to design tasks that prevent AI output from substituting for learners’ own production while still enabling meaningful support.

Luckin et al. (2022) emphasise that AI readiness in education must foreground human-centred design principles. Applied to language teaching, this means creating instructional architectures that maintain interpretive effort. Teachers must be able to justify when AI use is pedagogically defensible, when it undermines developmental goals, and how to scaffold critical engagement with AI outputs.

Such competence cannot be developed through ad hoc workshops. It requires sustained engagement with theoretical models of scaffolding, sociocultural mediation, and cognitive load. Teacher education programmes must therefore integrate AI considerations into modules on writing development, formative feedback, and learner autonomy rather than isolating them in discrete technology sessions.

5. Sociolinguistic Reflexivity and Algorithmic Normativity

Generative AI systems disproportionately reproduce dominant Anglocentric discourse norms embedded in their training data (Bender et al., 2021). In EFL contexts, this probabilistic bias may reinforce linguistic hierarchies and accelerate rhetorical convergence toward prestige varieties. Teachers who lack sociolinguistic reflexivity may inadvertently validate algorithmically standardised voice as universally superior.

Operational AI literacy must therefore include awareness of algorithmic normativity. Drawing on Bourdieu’s (1991) concept of linguistic capital, teacher trainees should be able to analyse how AI-mediated feedback may amplify dominant discourse forms and marginalise culturally situated rhetorical practices. This competence extends beyond ethical caution; it becomes a matter of pedagogical responsibility.

Teacher education programmes must cultivate the capacity to interrogate whose English is being reproduced, whose rhetorical traditions are being privileged, and how learners’ voices are shaped under algorithmic optimisation. Without such reflexivity, AI literacy collapses into technocratic accommodation.

6. Designing Syllabi: From Add-On to Structural Integration

To operationalise AI literacy meaningfully, it must be structurally embedded across stages of teacher education. In initial teacher training contexts such as CELTA, emphasis should be placed on classroom governance under AI conditions, transparent AI-use policies, and safeguarding learners’ own language production. At MA level, programmes should engage trainees in critical analysis of AI-mediated feedback, validity considerations, and design-based evaluation of AI-supported tasks. At doctoral level, AI literacy evolves into research competence: the ability to design empirical studies on AI-mediated writing development and contribute to theoretical reconceptualisations of writing constructs.

What unifies these levels is not tool proficiency but theoretical integration. AI literacy becomes cumulative: from awareness to analysis to epistemic contribution. It must be assessed through tasks that require validity reasoning, pedagogical justification, and critical sociolinguistic positioning rather than through demonstrations of technical usage.

7. From Literacy to Professional Accountability

The operationalisation of AI literacy in teacher education represents a shift from technological adaptation to professional accountability. Teachers in AI-mediated classrooms are no longer merely facilitators of language learning; they are mediators of algorithmic authority. Their decisions shape how learners interpret AI feedback, how constructs of writing ability evolve, and how linguistic norms are reproduced.

If teacher education fails to redefine AI literacy as applied-linguistic judgement, it risks producing practitioners who are technically competent but theoretically unprepared. In such contexts, AI becomes an unexamined authority rather than a critically governed resource.

Operationalising AI literacy is therefore not an optional curricular enhancement. It is a structural response to a transformation in the epistemology of writing pedagogy. In the age of generative systems, professional competence must include the capacity to govern, interrogate, and theoretically situate algorithmic mediation within language education.

References

Bender, E. M., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021). On the dangers of stochastic parrots: Can language models be too big? Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 610–623.

Bourdieu, P. (1991). Language and symbolic power. Harvard University Press.

Kane, M. (2006). Validation. In R. L. Brennan (Ed.), Educational measurement (4th ed., pp. 17–64). Praeger.

Luckin, R., Cukurova, M., Kent, C., & du Boulay, B. (2022). Empowering educators to be AI-ready. Computers and Education: Artificial Intelligence, 3, 100076.

Messick, S. (1989). Validity. In R. L. Linn (Ed.), Educational measurement (3rd ed., pp. 13–103). Macmillan.

Selwyn, N. (2023). Should robots replace teachers? AI and the future of education. Polity Press.

UNESCO. (2023). Guidance for generative AI in education and research. UNESCO.
